I asked on Hacker News what the worst-case scenario of AI “breaking loose” would be. This came after listening to Lex Fridman's interview with Max Tegmark [0], one of the people behind the popular open letter calling for a six-month pause on training AI systems more powerful than GPT-4 (in other words, the next generation of LLMs) to allow policy and safety to catch up.

One commenter pointed me to an in-depth presentation by the Center for Humane Technology on the dangers posed by AI, especially large language models. It’s a great watch, and I definitely recommend it.

There’s too much in this presentation to cover in one post, so I’ll break it up into multiple parts. In this part, I’ll discuss the overarching argument of the presentation and what’s special about LLMs that has so many people freaking out.

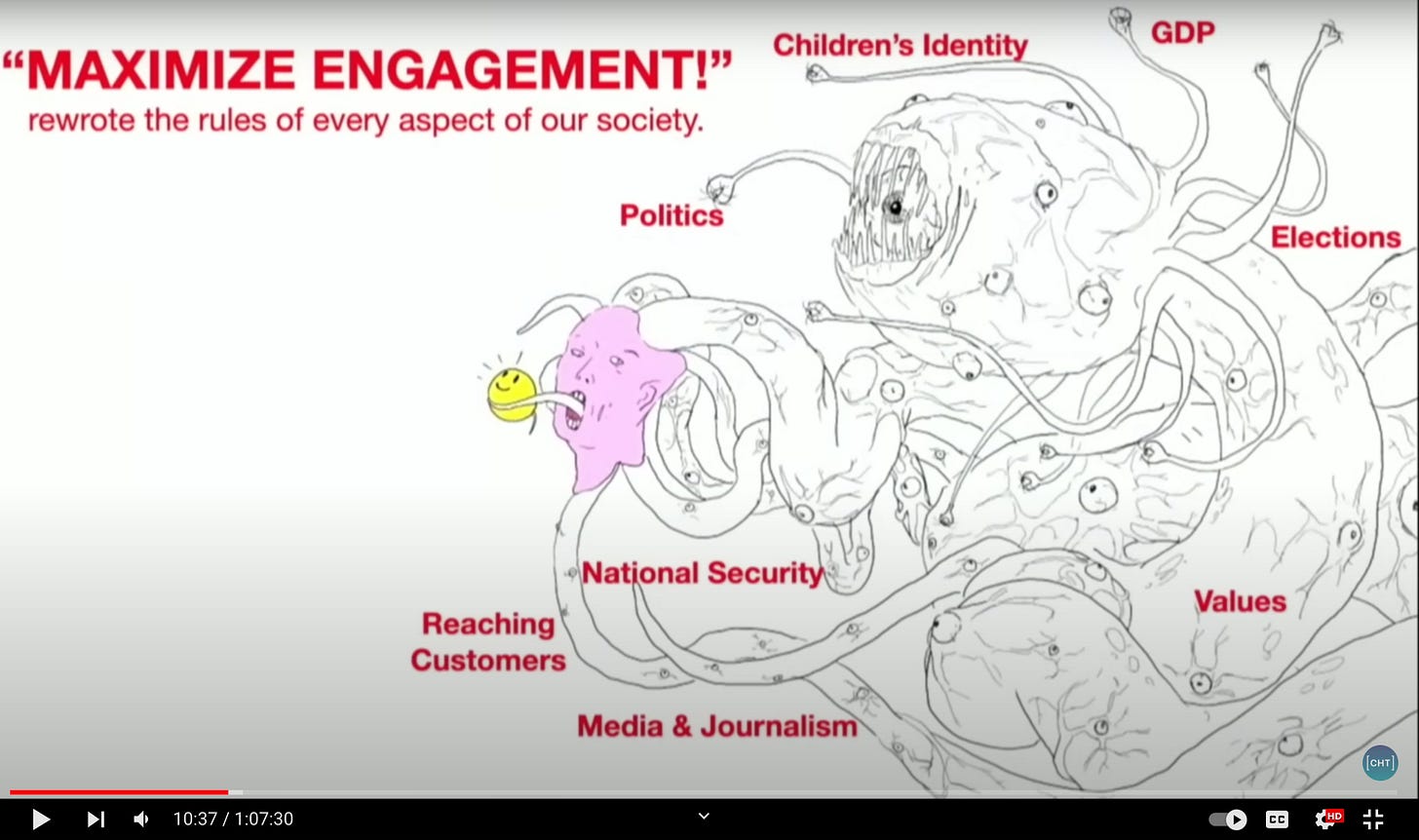

AI compared to social media

The crux of the argument is a comparison between social media and artificial intelligence. Social media is represented as a face with its benefits being shoved down its throat. The criticism of social media tends to focus on addiction, disinformation, mental health, polarization, and censorship.

But what you don’t see is the real force driving social media:

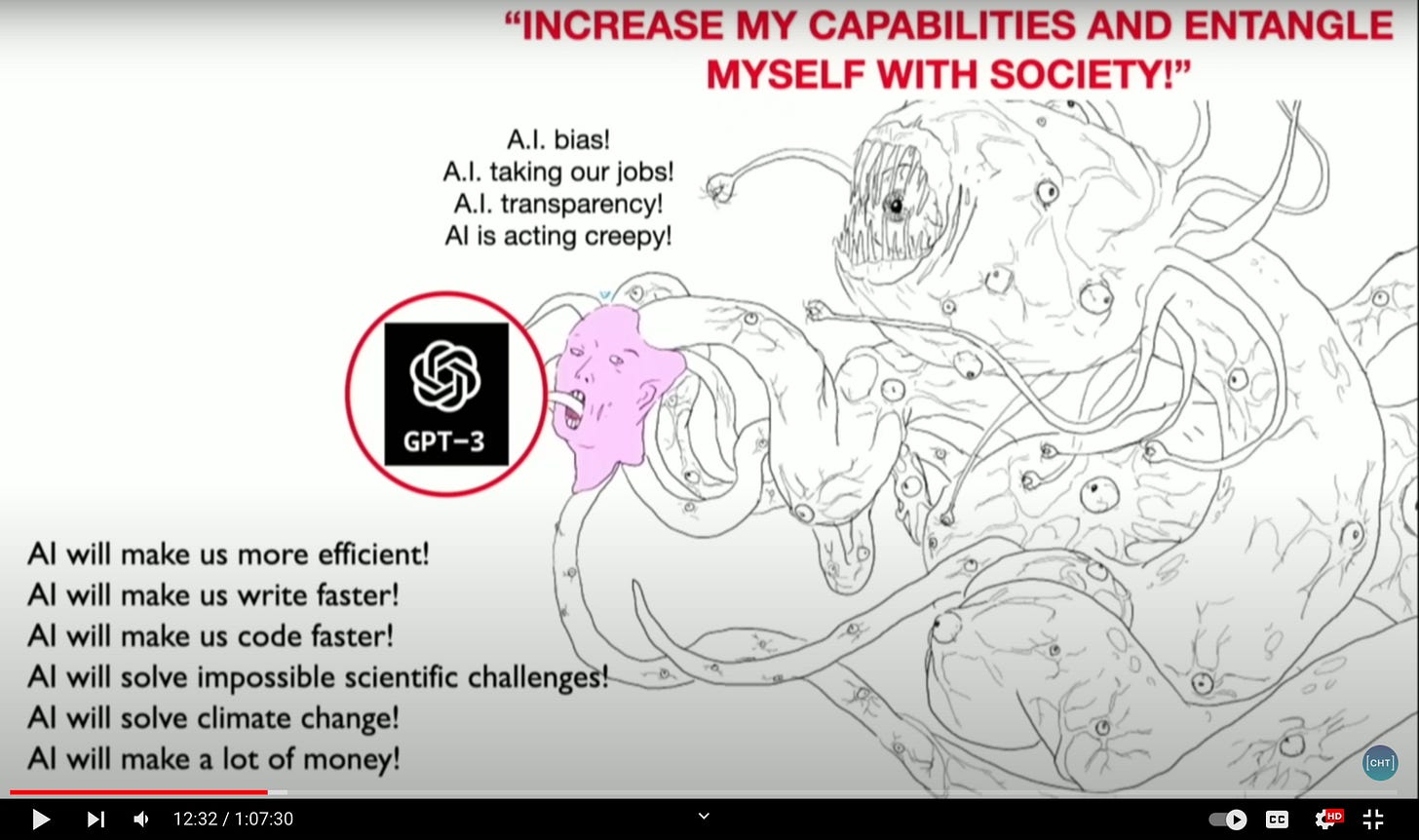

They draw the same comparison with GPT (a stand-in for modern large language models).

The monster behind AI is harder to pin down, but the presenters list things like:

Reality collapse

Fake everything

Trust collapse

Automated loopholes in law / cyberweapons / lobbying

Automated fake religions

Exponential blackmail / scams

A-Z testing of everything

Synthetic relationships

AlphaPersuade

I agree that the criticism of social media only scratches the surface of the issue or focuses too much on the symptoms. Is shadow-banning people on one side of the political spectrum really the worst thing about social media?

Social media has transformed much of society for the worse. Politics is worse than at any point in my lifetime, children are more depressed, and trust in institutions is eroding. The promised benefit of “connecting people” has fallen flat: people are more isolated than they were before social media.

I also agree that the criticisms of GPT so far have been superficial, but so has the discussion of its benefits. Sure, it’s sometimes creepy, and yes, it’ll help me write my code faster. But it’s so much more than that.

Language as the ultimate medium

The presenters make a great point by highlighting the importance of the medium, namely language. If there has been any common trend in AI, it’s been a push toward more end-to-end systems. The first chess engines were hand-coded. Later engines were given more leeway in judging the relative strength of positions. And today’s most general engines barely need to be taught chess at all: DeepMind’s AlphaZero was given only the rules and learned everything else from self-play and a simple scoring metric (win, lose, or draw), and its successor MuZero learned to play without even being given a model of the game’s dynamics.

We’re now seeing large language models perform well across multiple disciplines, sometimes even better than systems built explicitly for those disciplines. For instance, GPT learned to answer questions in many languages even though it was never explicitly trained or rewarded for answering questions in those languages.

General-purpose game-playing systems such as AlphaZero reach superhuman proficiency in chess, and the same method, trained separately on each game, excels at Go and shogi as well. LLMs appear to be following a similar progression, with a single model attaining proficiency across many domains. This means that any advance in the underlying large language model improves every application built on top of it.

GPT-4 is multimodal, capable of processing both text and images, though the image feature hasn’t been publicly released yet. The logical next step would be to train a GPT-like model on video and audio, significantly expanding the training data. In the future, one can envision a physical robot that learns from real-time video, audio, and other sensory input.

Within a few years, it’s plausible that a large language model could outperform today’s cutting-edge game engines. In AI, it’s usually wise to bet on the more general system.

In the next part I’ll discuss where the comparison between AI and social media breaks down.